Linux DMA From User Space

This page describes a prototype for a Linux user space DMA system. It provides the background for a newer version of the prototype, described at Linux DMA From User Space 2.0. New designs should start with the newer version.

Introduction

FPGAs provide the ability to put DMA engines into the Programmable Logic (PL) in a very flexible way. The challenge quickly moves from a hardware problem to a software problem when running Linux on the target. Another challenge with DMA is that it is difficult to provide a complete solution that meets the requirements of many varying system designs.

The primary goal of this page is to document and describe an existing DMA example which has been updated to be relevant to the latest release of Xilinx products (2018.1, 4.14 Linux kernel). A secondary goal is to provide enough background for the user to understand the example by pulling together a number of related DMA topics with their associated documentation.

This page assumes the reader has a reasonable knowledge of embedded Linux together with some knowledge of Linux kernel device drivers and user space applications.

Background Knowledge

Coherency

Software coherency means that the CPU software is required to perform cache maintenance functions to ensure that the memory system is coherent with the CPU caches. The cache maintenance functions can require significant CPU cycles.

Zynq 7K is s/w coherent when using the HP ports and h/w coherent when using the ACP port. The ACP port reads and writes directly into the CPU caches such that the caches and memory are coherent. The ACP port restricts the transactions which can be performed, so it does not work for all applications.

Zynq UltraScale+ MPSoC is s/w coherent by default (based on the 2018.1 release) when using the HP and HPC ports. It can be made h/w coherent through the system configuration together with cache coherent transactions on the HPC ports. It also has an ACP port with functionality similar to that of Zynq 7K.

Linux device drivers which are written in a typical manner with the kernel DMA APIs work in both s/w and h/w coherent systems. The device tree specifies whether a device is h/w coherent such that kernel DMA APIs know whether cache maintenance is required for the system.

Cached versus Non-Cached Memory

Non-cached memory tends to be used for performing DMA operations. Whether to use cached or non-cached memory is a system-level decision that should be based on the size of the data being transferred with DMA together with the amount of that data touched by the CPU software. Large data sets going through the CPU caches may affect the performance of the CPU software while smaller data may not. Cached memory may not be needed if the CPU software minimally touches the data of the DMA operation.

Kernel Space versus User Space For DMA

Linux device drivers are typically kernel drivers. Some users want to create user space drivers for a number of valid reasons. The design illustrated on this page provides a hybrid approach which uses a kernel driver and a user space application.

DMA In Linux

The Linux DMA Engine Framework

Linux provides a framework that allows most DMA hardware to be supported in a general way. The framework, known as the DMA Engine, provides the infrastructure for DMA drivers to plug into and then be accessed from kernel space with another client driver using a standard API. The framework is designed to work with many different forms of DMA including memory to memory such as the AXI CDMA and memory to device / device to memory for the AXI DMA. It also supports scatter gather.

Documentation for the DMA Engine: https://github.com/Xilinx/linux-xlnx/tree/master/Documentation/dmaengine, Bootlin (Free Electrons) DMA Engine Document

The Linux DMA Engine source code: https://github.com/Xilinx/linux-xlnx/tree/master/drivers/dma

A test client is also provided for reference. It is complex as it is intended to be a test rather than a simple example of using the DMA Engine API.

DMA Engine test source code: https://github.com/Xilinx/linux-xlnx/blob/master/drivers/dma/dmatest.c
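
The client side of the DMA Engine API is easier to see in a much smaller fragment than the test client. The following is a minimal kernel-side sketch, not the code of any Xilinx driver: the function names `example_dma_done()` and `example_dma_tx()` and the 3 second timeout are assumptions made for illustration. It is meant to be compiled as part of a kernel module and assumes the channel was already obtained with `dma_request_chan()` and that the buffer address came from the kernel DMA API.

```c
#include <linux/completion.h>
#include <linux/dmaengine.h>
#include <linux/errno.h>
#include <linux/jiffies.h>

/* Completion callback (hypothetical name): called by the DMA driver
 * when the descriptor has finished. */
static void example_dma_done(void *param)
{
	complete(param);
}

/*
 * Transmit one buffer on a memory to device channel and block until it
 * completes. dma_addr must be a DMA address from the kernel DMA API.
 */
static int example_dma_tx(struct dma_chan *chan, dma_addr_t dma_addr,
			  size_t len)
{
	struct dma_async_tx_descriptor *desc;
	DECLARE_COMPLETION_ONSTACK(done);
	dma_cookie_t cookie;

	/* Step 1: ask the DMA driver to prepare a descriptor. */
	desc = dmaengine_prep_slave_single(chan, dma_addr, len, DMA_MEM_TO_DEV,
					   DMA_CTRL_ACK | DMA_PREP_INTERRUPT);
	if (!desc)
		return -ENOMEM;

	desc->callback = example_dma_done;
	desc->callback_param = &done;

	/* Step 2: queue the descriptor on the channel. */
	cookie = dmaengine_submit(desc);
	if (dma_submit_error(cookie))
		return -EIO;

	/* Step 3: start the hardware on everything that is queued. */
	dma_async_issue_pending(chan);

	/* Step 4: wait; the timeout value is an arbitrary assumption. */
	if (!wait_for_completion_timeout(&done, msecs_to_jiffies(3000))) {
		/* Stop the channel so the callback cannot run after return. */
		dmaengine_terminate_sync(chan);
		return -ETIMEDOUT;
	}

	if (dma_async_is_tx_complete(chan, cookie, NULL, NULL) != DMA_COMPLETE)
		return -EIO;

	return 0;
}
```

A receive transfer would follow the same steps with `DMA_DEV_TO_MEM` as the direction. The channel is released with `dma_release_channel()` when the client driver is removed.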

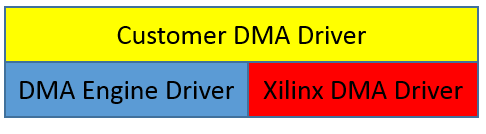

Xilinx Provided DMA Drivers

Xilinx has many DMA engines that may be used in many applications other than embedded, such as in IP blocks like PCIe. This page covers principles that apply to the more general purpose DMA such as the soft IP DMA for the PL. Xilinx provides Linux drivers for the general purpose DMA. The drivers use the Linux DMA Engine subsystem and provide the ability for a user to write their own Linux driver which uses the Xilinx driver in kernel space through the DMA Engine subsystem.

The Xilinx Linux Drivers wiki page, Linux DMA Drivers on Xilinx Wiki, provides details for each of the Xilinx drivers including the kernel configuration and test drivers.

Linux Kernel APIs

The kernel APIs, such as memory allocation for DMA, are well documented and are required when writing a driver which uses DMA.

Linux DMA API: https://www.kernel.org/doc/Documentation/DMA-API.txt

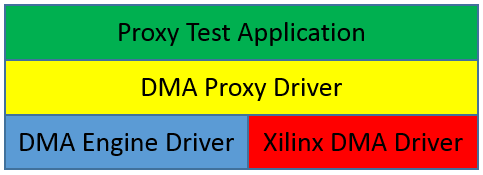

DMA Proxy Design

The DMA Proxy design provides 2 simple examples. It is designed to be simple to help users get a clearer understanding of the DMA Engine.

1. It illustrates how to use the Xilinx provided DMA driver for AXI DMA through the Linux DMA Engine.

2. It illustrates a simple method of DMA from user space.

The design consists of a kernel driver module and a user space application.

Design Details

The kernel driver is implemented as follows.

- It uses dmam_alloc_coherent() to allocate DMA memory which is non-cached for a s/w coherent system. The memory is to be shared with user space for DMA buffers.

- It provides a character interface to user space to allow an application to map the kernel memory into user space and initiate DMA transactions. The ioctl function starts the transaction and waits for it to complete such that it is a blocking interface.

- It controls the transmit and receive channels of the Xilinx DMA driver through the DMA Engine.

- It provides an internal test mode which allows the kernel driver to test a single DMA transfer without any user space application. This helps when testing changes to the kernel driver. It is controlled with a kernel module parameter.
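
The allocation and mapping steps in the list above can be sketched as follows. This is a kernel-side fragment under stated assumptions, not the driver's actual source: `struct example_channel`, `example_alloc()` and `example_mmap()` are hypothetical names, and the fragment assumes the driver's open handler stored the channel pointer in `file->private_data`. It is meant to be compiled as part of a kernel module.

```c
#include <linux/device.h>
#include <linux/dma-mapping.h>
#include <linux/errno.h>
#include <linux/fs.h>
#include <linux/mm.h>

/* Hypothetical per-channel state; the real driver's structure may differ. */
struct example_channel {
	struct device *dev;     /* device that owns the DMA memory */
	void *vaddr;            /* kernel virtual address of the buffer */
	dma_addr_t dma_handle;  /* address handed to the DMA Engine */
	size_t size;            /* size of the buffer in bytes */
};

/* Allocate the shared buffer. The allocation is device managed, so it is
 * released automatically when the driver is detached from the device. */
static int example_alloc(struct example_channel *ch, size_t size)
{
	ch->vaddr = dmam_alloc_coherent(ch->dev, size, &ch->dma_handle,
					GFP_KERNEL);
	if (!ch->vaddr)
		return -ENOMEM;

	ch->size = size;
	return 0;
}

/* mmap file operation: map the same buffer into the calling process so
 * that no copy between kernel and user space is needed. */
static int example_mmap(struct file *file, struct vm_area_struct *vma)
{
	struct example_channel *ch = file->private_data;
	size_t len = vma->vm_end - vma->vm_start;

	/* Refuse requests that are larger than the allocated buffer. */
	if (len > ch->size)
		return -EINVAL;

	return dma_mmap_coherent(ch->dev, vma, ch->vaddr, ch->dma_handle, len);
}
```

Because the buffer comes from the coherent allocator, the user space mapping has the same caching attributes as the kernel mapping, which is what lets the application and the DMA hardware see the same data without explicit cache maintenance.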

The user space application is implemented as follows.

- It accesses the kernel driver through character device nodes, one for each DMA channel (transmit and receive).

- It maps the kernel memory buffer into user space such that no copy between kernel and user space is required.

- It uses the kernel memory for DMA transmit and receive buffers.

- It initiates DMA transactions and waits for their completion through the driver ioctl function.
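
The application steps above can be sketched in a few lines of user space C. This is a minimal sketch rather than the application from the repository: the buffer size, the layout of `struct proxy_channel`, the status values and the ioctl request number `PROXY_IOCTL_XFER` are all assumptions and must match whatever the kernel driver actually defines.

```c
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <string.h>
#include <sys/ioctl.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical shared layout; it must match what the kernel driver maps. */
#define PROXY_BUFFER_SIZE (64 * 1024)

enum proxy_status { PROXY_NO_ERROR = 0, PROXY_BUSY, PROXY_TIMEOUT, PROXY_ERROR };

struct proxy_channel {
	unsigned char buffer[PROXY_BUFFER_SIZE]; /* DMA data */
	enum proxy_status status;                /* written by the driver */
	unsigned int length;                     /* bytes to transfer */
};

/* Hypothetical ioctl request that starts a transfer and blocks until done. */
#define PROXY_IOCTL_XFER 0

/*
 * Send len bytes through the channel behind the device node dev_path.
 * Returns 0 on success and -1 on any failure.
 */
int proxy_transfer(const char *dev_path, const void *data, unsigned int len)
{
	struct proxy_channel *ch;
	int fd, ret = -1;

	assert(dev_path != NULL);

	if (data == NULL || len > PROXY_BUFFER_SIZE)
		return -1;

	fd = open(dev_path, O_RDWR);
	if (fd < 0)
		return -1;

	/* Map the kernel DMA buffer so that no copy through the kernel is needed. */
	ch = mmap(NULL, sizeof(*ch), PROT_READ | PROT_WRITE, MAP_SHARED, fd, 0);
	if (ch == MAP_FAILED)
		goto out_close;

	/* Fill the DMA buffer in place and tell the driver how much to send. */
	memcpy(ch->buffer, data, len);
	ch->length = len;

	/* Blocking call: returns once the driver has finished the transfer. */
	if (ioctl(fd, PROXY_IOCTL_XFER, NULL) == 0 && ch->status == PROXY_NO_ERROR)
		ret = 0;

	munmap(ch, sizeof(*ch));
out_close:
	close(fd);
	return ret;
}
```

A call might look like `proxy_transfer("/dev/dma_proxy_tx", msg, sizeof(msg))`, where the node name is again an assumption. Because the ioctl blocks, a loopback test needs the receive channel started from a second thread or process before the transmit call is made.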

Hardware Design

The AXI DMA is connected to the HPC port of the MPSoC even though the system is not using cache coherent transactions and is not set up to be h/w coherent. The transmit stream is looped back to the receive stream. The DMA options include scatter gather. The width of the buffer length register can be increased to allow larger transfers.

Source Files

The source files are located in the Git repository for the newer version of this wiki page, Linux DMA From User Space 2.0.

Changed From Previous Design

The DMA Proxy Design was previously available in the community based on a 3.14 Linux kernel. The more significant changes in the current design are listed below.

• Removed cached memory option

• Changed from dma_common_mmap() to dma_mmap_coherent() as the former was not working.

• Changed the driver to a platform driver which relies on a device tree node

• Changed internal test mode from conditional compilation to a kernel module parameter.

Alternative Designs

DMA Proxy Design for AXI CDMA

The same DMA Proxy Design can also easily support AXI CDMA. The primary difference is that there is only one channel for a CDMA as it is doing memory to memory DMA transactions. The memory to memory DMA transactions require a different DMA Engine API call when preparing the buffer to be sent. Since there is only one channel, the shared memory between the application and driver is partitioned into source and destination buffers.

User Space Only Without Kernel Driver

Another simple design is a user space application/driver built on the UIO framework. The bare metal drivers provided with the Xilinx SDK can be used in a Linux UIO application, although the drivers are not currently tested in this manner. The challenge for this design is still the allocation of non-cached contiguous DMA memory, which is typically done in a kernel driver. There are open source driver examples which can perform this function such as https://github.com/ikwzm/udmabuf.

© Copyright 2019 - 2022 Xilinx Inc.